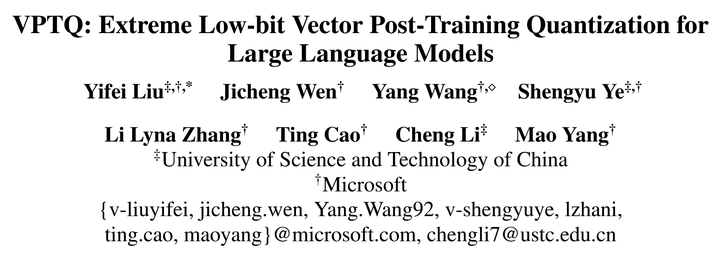

A gallery of figures related to VPTQ: Extreme Low-bit Vector Post-Training Quantization for Large Language Models, together with figures from related post-training quantization work (SmoothQuant, CLAQ, VQ4DiT, RPTQ, GPTVQ).

![[Paper Review] VPTQ: Extreme Low-bit Vector Post-Training Quantization for ...](https://moonlight-paper-snapshot.s3.ap-northeast-2.amazonaws.com/arxiv/vptq-extreme-low-bit-vector-post-training-quantization-for-large-language-models-2.png)

![[Paper Summary] VPTQ: Extreme Low-Bit Vector Post-Training Quantization For ...](https://velog.velcdn.com/images/bluein/post/0f84bf07-4cb3-471d-b983-925ffa092276/image.png)

![[Paper Summary] VPTQ: Extreme Low-Bit Vector Post-Training Quantization For ...](https://velog.velcdn.com/images/bluein/post/9569dc53-8b61-4c8f-a3d0-d8f5259fcd6a/image.png)

![[Paper Summary] VPTQ: Extreme Low-Bit Vector Post-Training Quantization For ...](https://velog.velcdn.com/images/bluein/post/932ca539-76ee-4706-b016-a7e05f84ba0c/image.png)

![[Paper Summary] VPTQ: Extreme Low-Bit Vector Post-Training Quantization For ...](https://velog.velcdn.com/images/bluein/post/bd7007ba-6453-4108-8285-64c1a3d433db/image.png)

![[LLM] SmoothQuant: Accurate and Efficient Post-Training Quantization ...](https://velog.velcdn.com/images/delee12/post/fd5d357c-258c-4d24-8e31-c91fc7f18fc8/image.png)

![[LLM Quantization Series] An Analysis of Classic PTQ Quantization Research - Zhihu](https://pic1.zhimg.com/v2-67788f4c104edcf1380e946fd1cea996_r.jpg)

![[VPTQ] Part 1: An Overview of Vector Post-Training Quantization | by ...](https://miro.medium.com/v2/resize:fit:1024/1*XNMJBC4Re64RI1a5sdW3Hg.png)

![[Paper Review] CLAQ: Pushing the Limits of Low-Bit Post-Training Quantization ...](https://moonlight-paper-snapshot.s3.ap-northeast-2.amazonaws.com/arxiv/claq-pushing-the-limits-of-low-bit-post-training-quantization-for-llms-2.png)

![[Paper Review] Understanding the difficulty of low-precision post-training ...](https://moonlight-paper-snapshot.s3.ap-northeast-2.amazonaws.com/arxiv/understanding-the-difficulty-of-low-precision-post-training-quantization-of-large-language-models-3.png)

![[Paper Review] VQ4DiT: Efficient Post-Training Vector Quantization for ...](https://moonlight-paper-snapshot.s3.ap-northeast-2.amazonaws.com/arxiv/vq4dit-efficient-post-training-vector-quantization-for-diffusion-transformers-1.png)

![[R] RPTQ: W3A3 Quantization for Large Language Models : r/MachineLearning](https://preview.redd.it/r-rptq-w3a3-quantization-for-large-language-models-v0-ac9876ni4sra1.png?width=1976&format=png&auto=webp&s=1e50037fe94204f6f145c64f4876c8e0fb069f7d)

![[PDF] SmoothQuant: Accurate and Efficient Post-Training Quantization ...](https://figures.semanticscholar.org/2c994fadbb84fb960d8306ee138dbeef41a5b323/3-Table1-1.png)

![[2402.15319] GPTVQ: The Blessing of Dimensionality for LLM Quantization](https://ar5iv.labs.arxiv.org/html/2402.15319/assets/fig/main_results_fig.png)