LLM INT8 Quantization

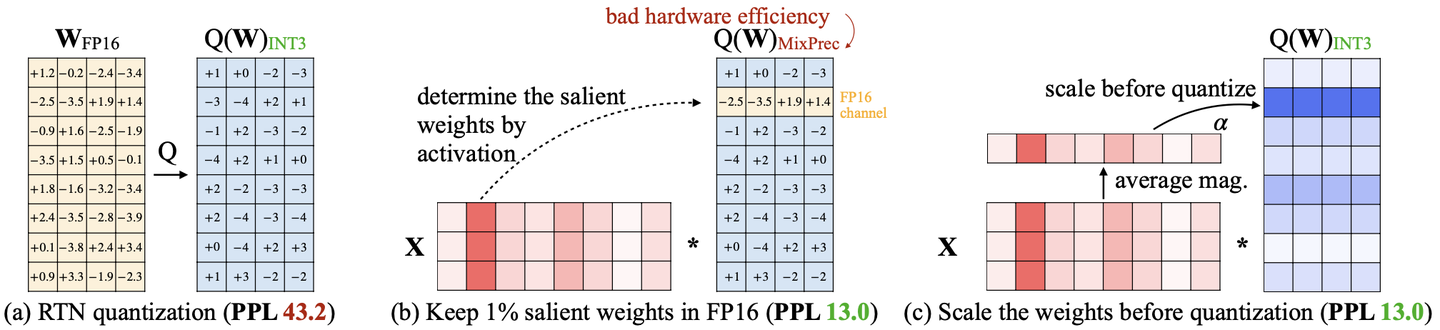

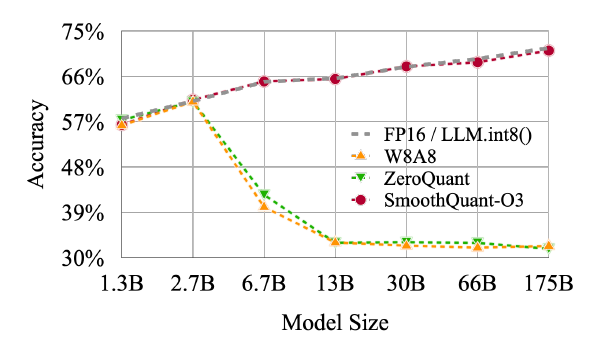

![[R] SmoothQuant: Accurate and Efficient Post-Training Quantization for ...](https://preview.redd.it/r-smoothquant-accurate-and-efficient-post-training-v0-ijsl5xu0id1a1.jpg?width=878&format=pjpg&auto=webp&s=cf6641fe9b281d3d3a2cf8b542a714df15fc767d)

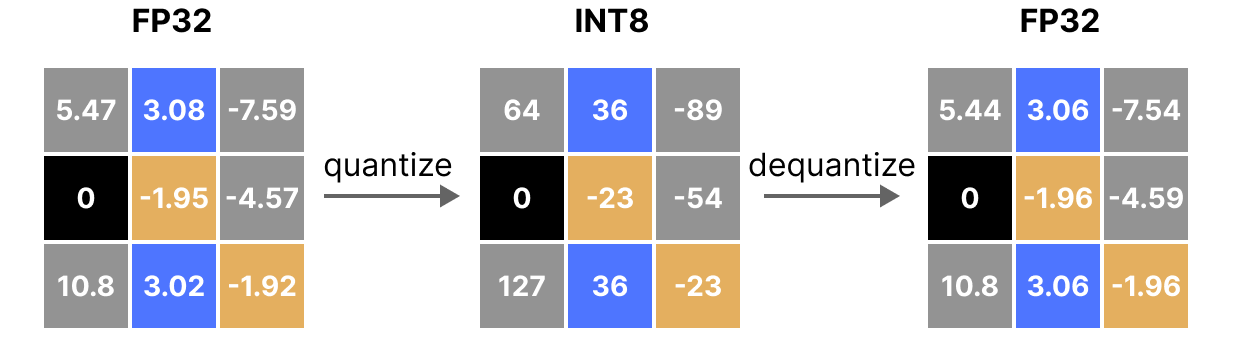

![[LLM] SmoothQuant: Accurate and Efficient Post-Training Quantization ...](https://velog.velcdn.com/images/delee12/post/a20f0553-486f-44a0-8ee2-44224cd6a281/image.png)

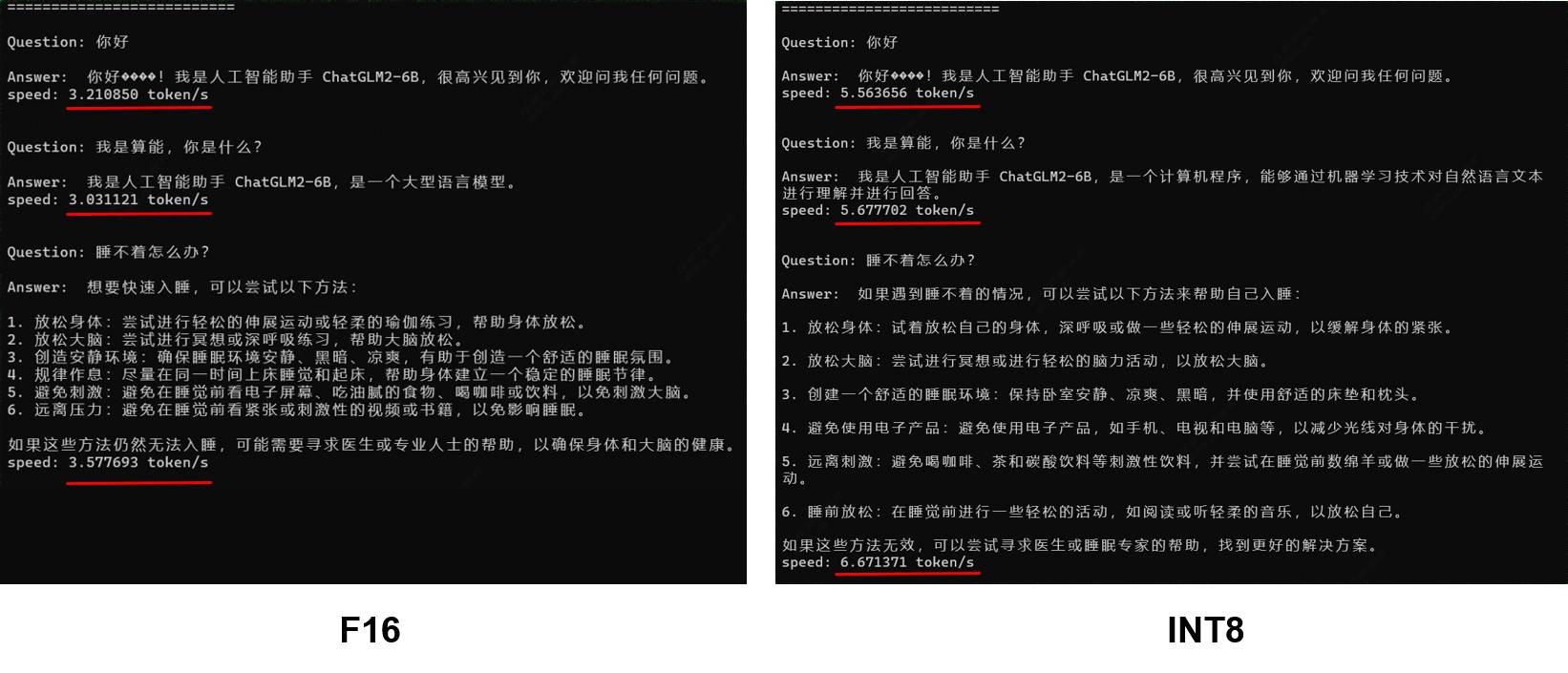

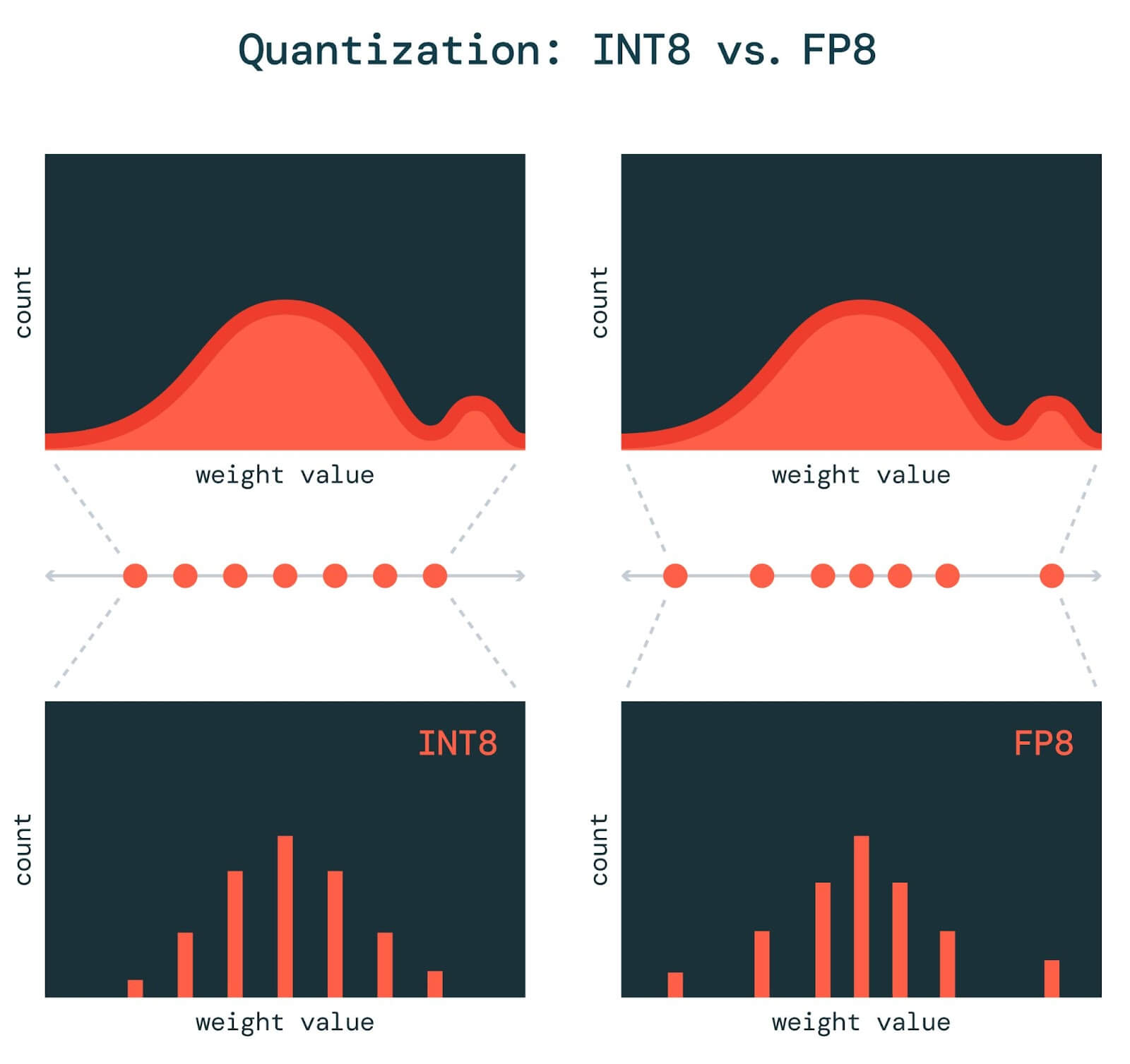

![[R] SmoothQuant: Accurate and Efficient Post-Training Quantization for ...](https://preview.redd.it/r-smoothquant-accurate-and-efficient-post-training-v0-fvv8kuu0id1a1.jpg?width=553&format=pjpg&auto=webp&s=309974871435584ee01297f4b4f8c85c26079d70)

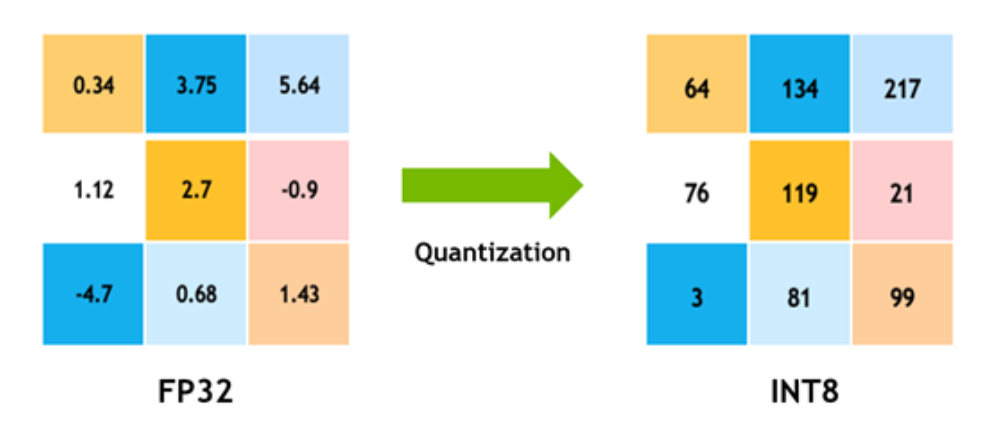

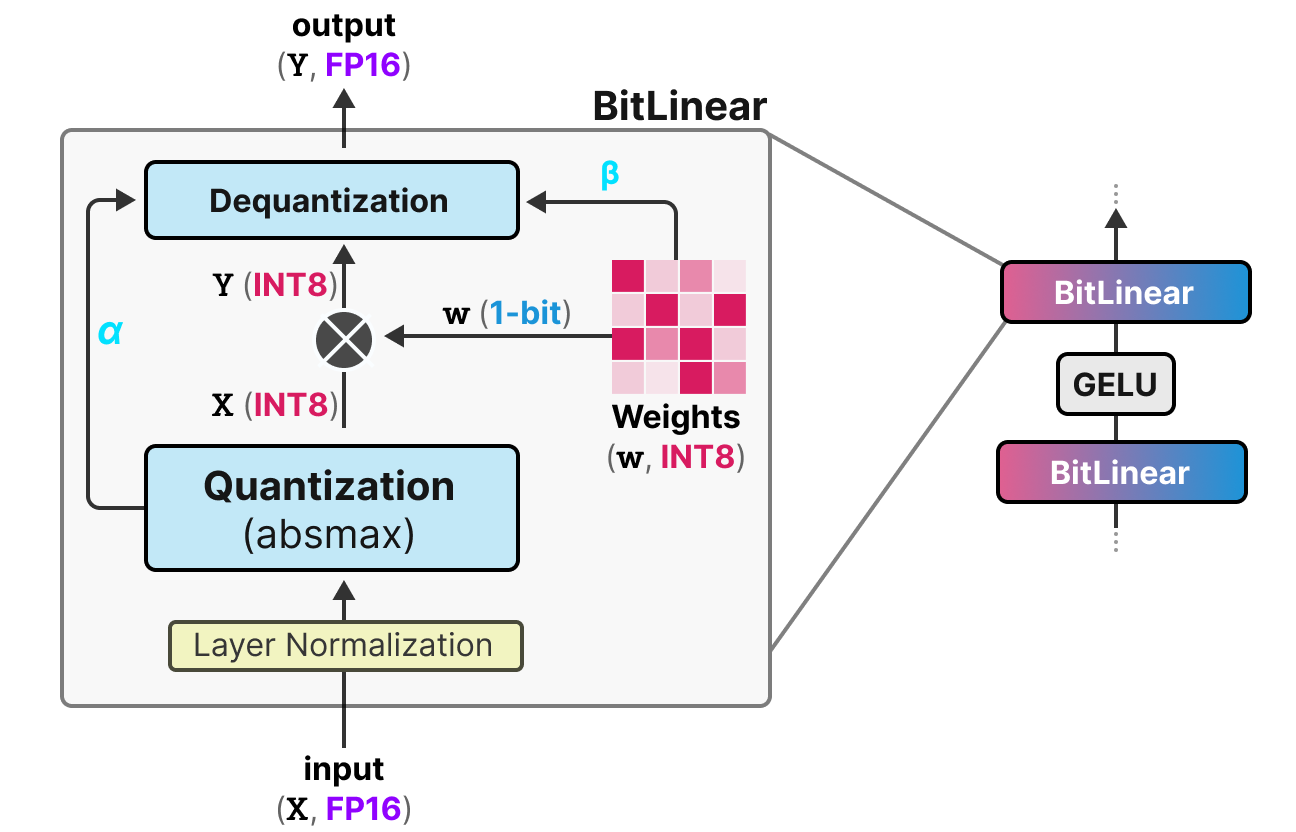

![[LLM] SmoothQuant: Accurate and Efficient Post-Training Quantization ...](https://velog.velcdn.com/images/delee12/post/fd5d357c-258c-4d24-8e31-c91fc7f18fc8/image.png)

![[LLM] SmoothQuant: Accurate and Efficient Post-Training Quantization ...](https://velog.velcdn.com/images/delee12/post/8815f09f-25e8-46c1-a637-1768348a3217/image.png)

![[vLLM — Quantization] bitsandbytes: 8-bit Optimizers, LLM.int8(), QLoRA ...](https://miro.medium.com/v2/resize:fit:1358/1*wZe1fGRYxEX4k14vHFDqSQ.png)

![[Key][22.08]LLM.int8()](https://velog.velcdn.com/images/yeomjinseop/post/86038bae-e82e-4b9f-939b-14804fbadd1c/image.png)

![[vLLM — Quantization] bitsandbytes: 8-bit Optimizers, LLM.int8(), QLoRA ...](https://miro.medium.com/v2/resize:fit:1358/1*fHzXvjWrEwKn5ehfjJAzFg.png)

![[LLM Quantization] LLM.int8(), GPTQ, SmoothQuant, AWQ, SqueezeLLM, ATOM, OmniQuant ...](https://pica.zhimg.com/v2-0cc7a30f2000012020a937915c6c25f6_1440w.jpg)

![[LLM Quantization] LLM.int8(), GPTQ, SmoothQuant, AWQ, SqueezeLLM, ATOM, OmniQuant ...](https://pic1.zhimg.com/v2-ab7861def7c293cdb4cefd3f01292a18_r.jpg)

![[vLLM — Quantization] bitsandbytes: 8-bit Optimizers, LLM.int8(), QLoRA ...](https://miro.medium.com/v2/resize:fit:1358/1*vqdBLWnRTBZg8G1rOTV51Q.png)

![[vLLM — Quantization] bitsandbytes: 8-bit Optimizers, LLM.int8(), QLoRA ...](https://miro.medium.com/v2/resize:fit:1358/0*O8-1xmAZ-5lu6Bay.png)

![[vLLM — Quantization] bitsandbytes: 8-bit Optimizers, LLM.int8(), QLoRA ...](https://miro.medium.com/v2/resize:fit:1358/1*aH5tl-rnCLUckZaXL-GYFw.png)

![[vLLM — Quantization] bitsandbytes: 8-bit Optimizers, LLM.int8(), QLoRA ...](https://miro.medium.com/v2/resize:fit:1358/1*krNz89KIMMSJWuvkO4XrCg.png)

![[vLLM — Quantization] bitsandbytes: 8-bit Optimizers, LLM.int8(), QLoRA ...](https://miro.medium.com/v2/resize:fit:1358/1*Q8b86XBBCPRAGNRK8jrjgQ.png)

![[vLLM — Quantization] bitsandbytes: 8-bit Optimizers, LLM.int8(), QLoRA ...](https://miro.medium.com/v2/resize:fit:1358/1*vIVj6r_ndviap2Qpw45Nng.png)

![[vLLM — Quantization] bitsandbytes: 8-bit Optimizers, LLM.int8(), QLoRA ...](https://miro.medium.com/v2/resize:fit:1358/1*4HNx7zn0j1FBo9LTE31mQg.png)